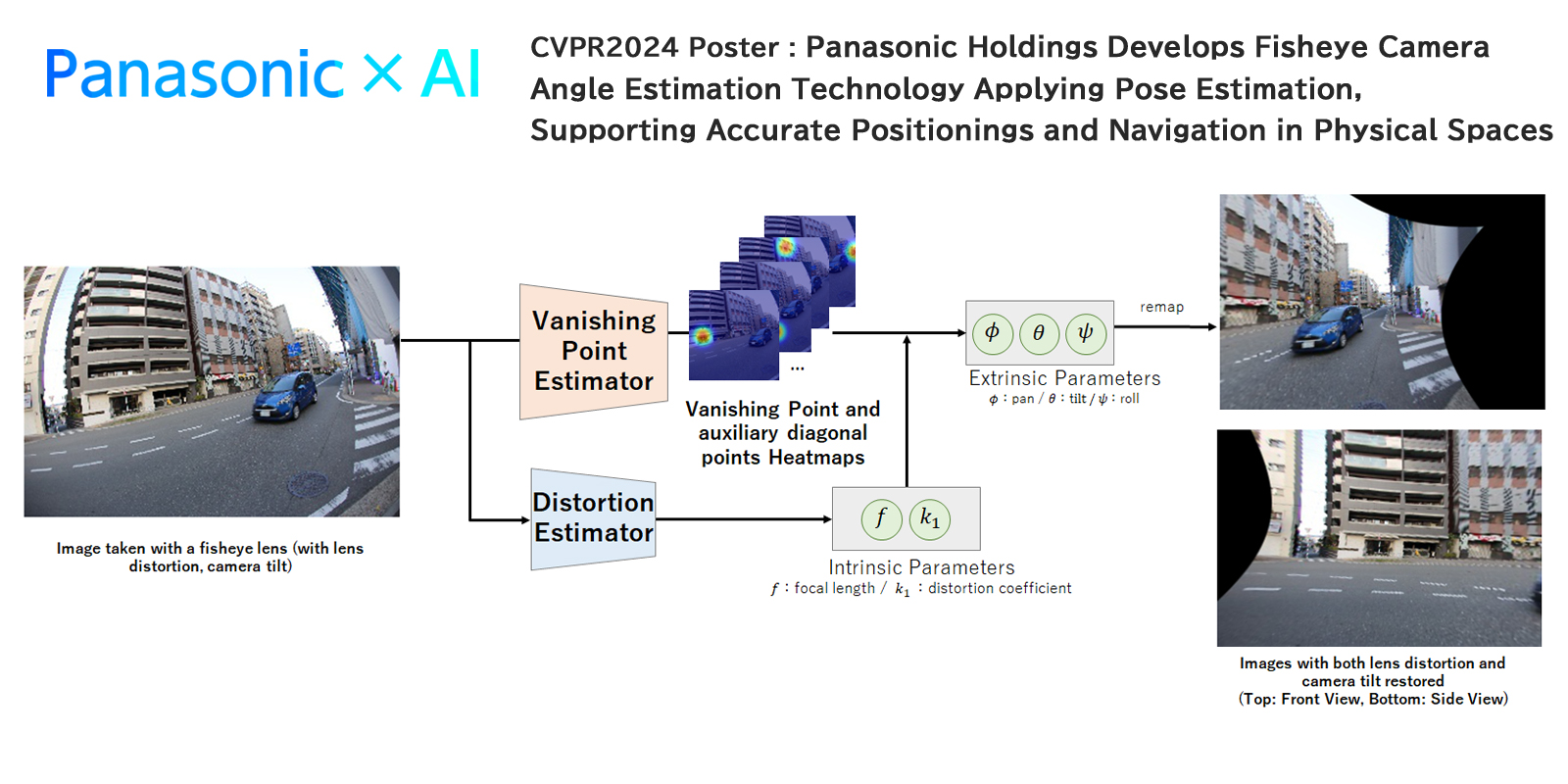

Image-based camera angle estimation is desirable for accurate positioning and navigation on low-cost, miniaturized devices in cars, drones, and robots. However, city scene images contain many objects, which makes it hard for calibration methods to key in on the ground and vertical directions needed for camera angle estimation. Estimating camera angles from a single image is difficult even under the Manhattan world assumption. Conventional calibration methods estimate camera angles from many vanishing points (VPs), which have specific appearances at infinity. VPs lie in six directions, at both ends of the X-, Y-, and Z-axes of a three-dimensional orthogonal coordinate system. In addition to these VPs, the method introduces eight more points, called auxiliary diagonal points (ADPs), oriented at either 45° or −45° from each of the X-, Y-, and Z-axes. Because ADPs can be treated in the same way as VPs, they increase the information available for AI training, which improves the accuracy and robustness of camera angle estimation.
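To make the geometry concrete, the following minimal Python sketch lists the six VP directions and one possible arrangement of the eight ADP directions, then rotates them into a camera frame. It is an illustration under stated assumptions, not the published implementation. The ADP layout is assumed to be the eight cube-diagonal directions (±1, ±1, ±1)/√3, whose projections onto each coordinate plane lie at ±45° to the axes in that plane. The function names and the pan/tilt/roll convention are hypothetical.

```python
import itertools

import numpy as np


def vp_directions():
    """Six unit vectors: both ends of the X-, Y-, and Z-axes."""
    eye = np.eye(3)
    return np.vstack([eye, -eye])


def adp_directions():
    """Eight unit vectors along the cube diagonals.

    ASSUMPTION: this layout is one reading of "±45° from each axis";
    the source does not spell out the exact ADP coordinates.
    """
    corners = np.array(list(itertools.product([1.0, -1.0], repeat=3)))
    return corners / np.linalg.norm(corners, axis=1, keepdims=True)


def rotation_from_pan_tilt_roll(pan, tilt, roll):
    """World-to-camera rotation from angles in radians.

    ASSUMPTION: pan about Y, tilt about X, roll about Z, applied in
    that order. The source does not define an angle convention.
    """
    cp, sp = np.cos(pan), np.sin(pan)
    ct, st = np.cos(tilt), np.sin(tilt)
    cr, sr = np.cos(roll), np.sin(roll)
    r_pan = np.array([[cp, 0.0, sp], [0.0, 1.0, 0.0], [-sp, 0.0, cp]])
    r_tilt = np.array([[1.0, 0.0, 0.0], [0.0, ct, -st], [0.0, st, ct]])
    r_roll = np.array([[cr, -sr, 0.0], [sr, cr, 0.0], [0.0, 0.0, 1.0]])
    return r_roll @ r_tilt @ r_pan


def to_camera_frame(directions, rotation):
    """Rotate row-vector directions from the world frame to the camera frame."""
    return directions @ rotation.T


if __name__ == "__main__":
    points = np.vstack([vp_directions(), adp_directions()])  # 6 + 8 = 14 directions
    rotation = rotation_from_pan_tilt_roll(np.radians(20), np.radians(-10), np.radians(5))
    print(to_camera_frame(points, rotation).round(3))
```

Under this assumed layout, the 14 directions are spread evenly over the sphere, so more of them tend to fall inside the field of view for any camera orientation than with the six VPs alone.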

Additionally, the proposed method uses heatmaps to robustly estimate VPs in scenes that contain few artificial objects, whereas conventional methods use arc detection. Heatmaps are widely used for accurate and robust estimation in computer vision tasks, especially pose estimation and skeletal detection. Using heatmaps, the proposed neural networks estimate the probability that each pixel is a VP and determine the VP image coordinates from the areas with a high probability. By contrast, conventional methods estimate these VP coordinates directly. Figure 1 shows that the proposed heatmap-based method detected VPs and ADPs accurately. The final camera angles are derived from combinations of VPs, ADPs, and lens distortion. The lens distortion is estimated using technology presented in 2022*2.
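The step from a per-pixel probability map to image coordinates can be sketched as follows. This is a minimal illustration, assuming one heatmap per VP or ADP with a single dominant peak. It takes the highest-probability pixel and refines it with a weighted centroid over a small window. The function names, the window size, and the `min_peak` threshold are hypothetical and are not taken from the published method.

```python
import numpy as np


def gaussian_heatmap(height, width, center_xy, sigma=2.0):
    """Synthetic heatmap with a Gaussian bump at center_xy (stand-in for a network output)."""
    xs = np.arange(width)
    ys = np.arange(height)[:, None]
    cx, cy = center_xy
    return np.exp(-((xs - cx) ** 2 + (ys - cy) ** 2) / (2.0 * sigma ** 2))


def decode_heatmap(heatmap, window=3, min_peak=0.5):
    """Return (x, y, score) for the strongest peak, or None if no peak is confident.

    The peak pixel is located with argmax, then refined to sub-pixel
    precision by a weighted centroid over a (2 * window + 1) square patch.
    """
    peak_y, peak_x = np.unravel_index(np.argmax(heatmap), heatmap.shape)
    score = float(heatmap[peak_y, peak_x])
    if score < min_peak:
        return None  # treat the point as not visible in this image

    # Clip the refinement window to the image borders.
    y0, y1 = max(peak_y - window, 0), min(peak_y + window + 1, heatmap.shape[0])
    x0, x1 = max(peak_x - window, 0), min(peak_x + window + 1, heatmap.shape[1])
    patch = heatmap[y0:y1, x0:x1]
    ys, xs = np.mgrid[y0:y1, x0:x1]

    total = patch.sum()
    x = float((xs * patch).sum() / total)
    y = float((ys * patch).sum() / total)
    return x, y, score


if __name__ == "__main__":
    heatmap = gaussian_heatmap(64, 64, center_xy=(20.3, 41.6))
    print(decode_heatmap(heatmap))
```

Returning `None` for a weak peak lets a downstream angle solver skip points that are not visible, rather than forcing a coordinate for every VP and ADP.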

Figure 2 shows the qualitative results obtained on synthetic fisheye images generated from panoramic image datasets. Vertical and horizontal reference lines are drawn to visualize rotations and distortion. In Figure 2(a), the reference lines produced by the conventional method are inclined relative to the ground-truth cross, indicating substantial angle and lens distortion errors. By contrast, the new method developed by Panasonic Holdings achieved accurate angle estimation, as shown by the cross formed by the cyan line and the magenta or yellow lines in Figure 2(b).

The method can handle difficult conditions in which city images contain few artificial objects. In particular, it accurately estimates the angle from city images dominated by street trees. Experimental results on large-scale datasets and with off-the-shelf cameras demonstrated the effectiveness of the proposed method, which achieved the world’s highest accuracy*3.
